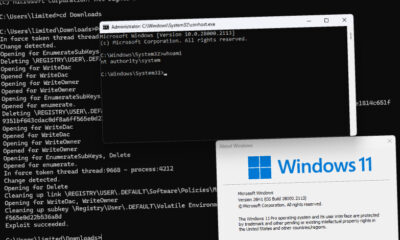

On Tuesday, Anthropic confirmed that internal source code for its Claude Code artificial intelligence assistant was inadvertently released because of human error in its packaging process. The incident, in which the code was published via the npm software registry, did not expose sensitive customer data or credentials, according to the company.

Details of the Incident

An Anthropic spokesperson stated the leak was a “release packaging issue caused by human error, not a security breach.” The exposed material consisted of proprietary internal code related to Claude Code, an AI tool designed to assist software developers. The code was made accessible through npm, a widely used repository for JavaScript software packages, before being swiftly removed.

The company emphasized that no user data, account information, or system credentials were compromised in the event. This distinction is critical for users and enterprise clients who rely on the platform for coding tasks. Anthropic’s immediate response involved securing the leaked code and initiating an internal review of its software deployment procedures.

Context and Industry Reaction

This incident occurs amidst heightened scrutiny of AI safety and security practices across the technology sector. Anthropic, a leading AI research and safety company, is known for its focus on developing reliable and controllable AI systems. Claude Code is part of its suite of AI assistant products competing in a rapidly growing market for developer tools.

Security experts note that while the exposure of internal source code is a serious operational lapse, the absence of customer data exposure significantly reduces the immediate risk to users. However, such leaks can reveal software architecture, proprietary algorithms, or other intellectual property that competitors might analyze.

The npm registry, operated by GitHub, is a central hub for open-source and private software packages used by millions of developers. Incidents involving unintended publication on such platforms highlight the challenges companies face in managing complex software supply chains and deployment pipelines.

Broader Implications for AI Security

The event underscores the operational security challenges facing AI companies as they scale their development and release processes. As AI models and their supporting infrastructure become more complex, the potential for human error in deployment increases. This incident serves as a reminder for all software firms to audit their continuous integration and delivery (CI/CD) systems and access controls.

Anthropic’s prompt confirmation of the issue aligns with best practices for incident transparency, aiming to maintain trust with its developer community and enterprise clients. The company’s statement was shared directly with news outlets, including CNBC, providing a clear factual account.

Next Steps and Resolution

Anthropic is expected to complete its internal investigation into the root cause of the packaging error. The company will likely implement additional safeguards or automated checks to prevent similar incidents in the future. Standard industry response includes reviewing access logs to confirm the scope of the exposure and notifying any regulatory bodies if required.

The focus now shifts to the company’s ability to reinforce the security of its software development lifecycle. Observers will watch for any follow-up statements detailing specific corrective actions. For users of Claude Code, the company has indicated that service continues normally and that no action is required on their part.

Source: CNBC