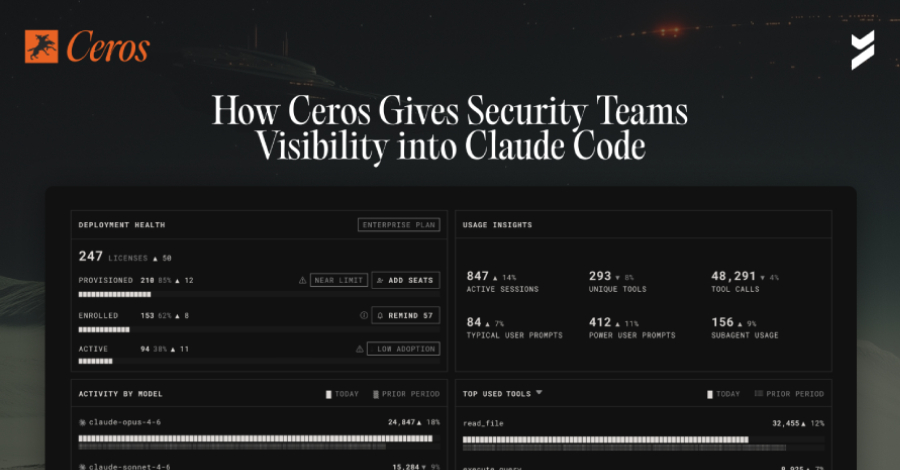

Ceros has introduced a security platform for managing the access and activities of artificial intelligence (AI) coding agents within corporate technology environments. The platform is designed to give security teams oversight and control over tools like Anthropic’s Claude Code, which software engineers increasingly use.

The Rise of Non-Human Actors

For years, enterprise security strategies have focused on managing human users and traditional service accounts. These systems govern identity verification and access permissions for employees and automated processes. However, a new type of actor, the AI coding agent, now operates in many companies without being governed by these established security controls.

Claude Code, an AI assistant created by Anthropic, is one such agent being deployed at scale across engineering departments. It is capable of reading source code files, executing commands in system shells, and making calls to external application programming interfaces (APIs). These actions, while productive, occur outside the visibility of traditional security tools built for human-centric workflows.

Addressing the Security Gap

The Ceros platform aims to close this security gap. It provides a centralized system where security administrators can monitor the actions of AI agents. The tool allows teams to see what files an agent accesses, what commands it runs, and what external services it communicates with.

Furthermore, the platform enables the implementation of guardrails and policy controls specifically for these non-human actors. Security policies can be set to restrict an AI agent’s access to sensitive data repositories or to prevent it from running certain high-risk commands. This provides a layer of governance similar to what exists for human engineers.
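To make the idea of a guardrail concrete, the following is a minimal Python sketch of how a policy check of this kind might look. It is not Ceros's implementation or API, which the article does not describe. The `AgentPolicy` class, its fields, and both helper functions are hypothetical names chosen for illustration only.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch
import shlex


@dataclass
class AgentPolicy:
    """Hypothetical policy for an AI coding agent (illustrative only)."""
    # Glob patterns for files the agent must not read.
    protected_paths: list[str] = field(default_factory=list)
    # Program names the agent must not execute.
    denied_commands: set[str] = field(default_factory=set)


def is_file_access_allowed(policy: AgentPolicy, path: str) -> bool:
    """Return False if the path matches any protected glob pattern."""
    return not any(fnmatch(path, pattern) for pattern in policy.protected_paths)


def is_command_allowed(policy: AgentPolicy, command_line: str) -> bool:
    """Return False if the first token of the command is on the deny list.

    This is a deliberately naive check: it inspects only the program name,
    so wrappers such as `sudo` or shell pipelines would need extra handling.
    """
    tokens = shlex.split(command_line)
    if not tokens:
        return True
    return tokens[0] not in policy.denied_commands


# Example policy: block secrets and private keys, deny two risky programs.
policy = AgentPolicy(
    protected_paths=["secrets/*", "*.pem"],
    denied_commands={"rm", "curl"},
)

print(is_file_access_allowed(policy, "src/app.py"))
print(is_file_access_allowed(policy, "secrets/api_keys.env"))
print(is_command_allowed(policy, "git status"))
print(is_command_allowed(policy, "rm -rf build"))
```

A real product would likely evaluate such rules centrally and log every decision, but the sketch shows the basic shape: each action an agent proposes is checked against a policy before it is allowed to proceed.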

Broader Industry Implications

The launch reflects a growing recognition within the cybersecurity industry that generative AI and autonomous agents, as they become integrated into standard development workflows, introduce new attack surfaces and compliance challenges. If not properly supervised, these agents can expose proprietary code, mishandle customer data, or be manipulated into performing malicious actions.

Industry analysts note that the proliferation of AI coding assistants is forcing a reevaluation of enterprise security architectures. Security tools designed for the previous era of software development are often ill-equipped to handle the behaviors and access patterns of AI agents.

Looking Ahead

The release of specialized security tools for AI agents is expected to accelerate as adoption grows. Other cybersecurity firms are likely to develop similar monitoring and control capabilities for a variety of AI models and automation platforms. The focus will remain on providing visibility without hindering the productivity gains that these AI tools offer to development teams. Ongoing development will also need to address the evolving capabilities of the AI agents themselves, ensuring security controls remain effective as the technology advances.
