A significant cultural shift is underway within enterprise security departments globally: teams are moving away from a default posture of denial and toward the managed adoption of modern collaborative and generative AI tools. This change, observed throughout 2024 and expected to solidify by 2026, addresses the long-standing inefficiency of security teams that function primarily as gatekeepers who reject new technology requests.

The “Doctor No” Phenomenon

For years, a common archetype, often referred to internally as “Doctor No,” has been prevalent in corporate security. The role is characterized by automatic rejection of new software and services requested by other business units, including popular generative AI platforms such as ChatGPT and DeepSeek as well as various file-sharing and productivity applications. The primary purpose of this stance has been to mitigate perceived risk.

This approach, while historically framed as cautious security practice, has increasingly been identified as a bottleneck to innovation and operational efficiency. Product development, marketing, and research teams have reported delays and hindered workflows due to blanket security prohibitions on tools deemed essential for their work.

The Business and Security Cost of Denial

Industry analysts note that a policy of simple denial has created several unintended consequences. It often leads to “shadow IT,” where employees use unvetted and unsanctioned tools without the knowledge of the security team, potentially creating greater, unmanaged risks. Furthermore, it positions the security department as an obstacle to business objectives rather than a strategic enabler.

An increasingly sophisticated threat landscape has also demanded a more nuanced security approach. Simply blocking access is no longer seen as a viable or complete strategy for organizations that rely on digital collaboration and AI-driven analytics to remain competitive.

Toward a Model of Secure Enablement

The emerging model for modern enterprise security emphasizes “secure enablement.” Under this framework, security teams work proactively with other departments to evaluate new tools, establish approved usage policies, implement appropriate safeguards such as data loss prevention and monitoring, and provide secure, company-sanctioned alternatives.

Instead of issuing a flat “no,” security professionals are now tasked with asking, “How can we do this safely?” Answering that question involves conducting formal risk assessments, negotiating contracts with vendors for enterprise-grade security features, and deploying technical controls that allow safe usage rather than preventing all usage.

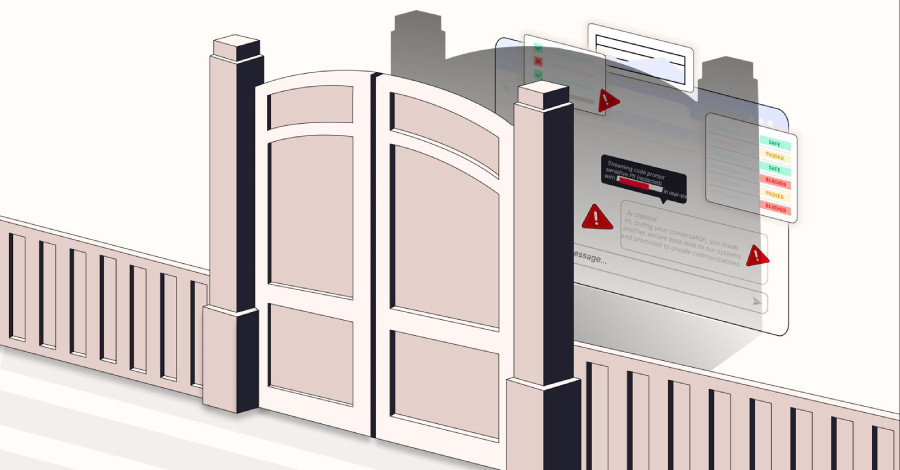

For generative AI tools, this may involve implementing specialized gateways that filter sensitive data from prompts, using API-only access with logging, or deploying locally hosted, proprietary models where applicable. The goal is to manage the risk while unlocking the productivity benefits.
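As a rough illustration of the gateway idea, the following Python sketch redacts a few kinds of sensitive data from a prompt and records an audit entry before forwarding it. Everything in it is an assumption made for illustration: the pattern set, the `sk-` key format, and the names `redact_prompt` and `gateway` are hypothetical, and a production gateway would rely on the organization's own data loss prevention rules rather than three regular expressions.

```python
import re

# Hypothetical detection rules for illustration only; real deployments
# would use the organization's own DLP policies and classifiers.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),  # assumed key format
}


def redact_prompt(prompt):
    """Replace each match with a placeholder and count matches per label."""
    findings = {}
    redacted = prompt
    for label, pattern in PATTERNS.items():
        redacted, count = pattern.subn(f"[{label}_REDACTED]", redacted)
        if count:
            findings[label] = count
    return redacted, findings


def gateway(prompt, send, audit_log):
    """Redact the prompt, append an audit entry, then forward it.

    `send` stands in for whatever client calls the approved AI service.
    Only the finding counts and the redacted length are logged, so the
    audit trail itself does not store the sensitive values.
    """
    redacted, findings = redact_prompt(prompt)
    audit_log.append({"findings": findings, "chars": len(redacted)})
    return send(redacted)
```

A caller would pass its own `send` function and a log sink, for example `gateway(user_prompt, client_call, audit_log)`. Keeping redaction separate from forwarding lets the same rules be reused for logging-only or blocking modes.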

Industry Reactions and Implementation

Major technology consultancies and cybersecurity firms have begun publishing frameworks to guide this transition. The recommended path involves cross-functional committees, clear governance policies, and continuous employee education on the responsible use of enabled technologies.

Early-adopting organizations report that shifting the security culture from blocker to partner requires significant change management. It requires training security staff in business negotiation and risk-based analysis, as well as building trust with other departments through transparency and collaboration.

Based on current adoption trends and vendor roadmaps, this transition from a culture of denial to one of managed enablement is projected to become the standard operational model for enterprise security by 2026. Organizations that fail to adapt may face increased internal friction and a higher prevalence of unmanaged security risks from unsanctioned tool usage.