A security vulnerability in Anthropic’s Claude browser extension for Google Chrome could have let an attacker silently inject malicious prompts into the AI assistant when a user simply visited a compromised website. The flaw, which required no interaction beyond loading the page, was disclosed by cybersecurity researchers this week and highlights a significant risk to users of the popular AI tool.

Details of the Security Vulnerability

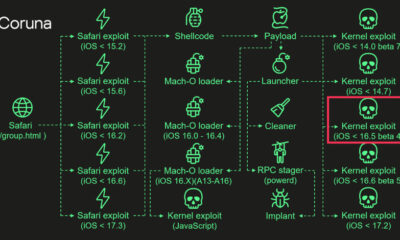

According to a report shared by Koi Security researcher Oren Yomtov, the flaw in the Claude extension allowed any website to inject prompts into the AI assistant as if the user had written them. This type of attack, known as a zero-click or drive-by exploit, means a user could trigger a malicious prompt without clicking on any element, merely by loading a web page containing the exploit code.

The vulnerability is described as a prompt injection delivered through cross-site scripting (XSS). It bypasses normal security controls by getting the extension to accept attacker-supplied text and act on it as if it were a legitimate command. This could allow an attacker to make the AI assistant perform unauthorized actions, potentially leading to data theft, account compromise, or the spread of misinformation through the user’s account.

Researcher Findings and Disclosure

Oren Yomtov detailed the technical mechanism of the attack in his report. The exploit leveraged the extension’s architecture to pass malicious prompt data from a visited website directly into the Claude chat interface. Because the extension treated this injected text as user input, it would be processed by Anthropic’s AI models without any security warning or user consent.

The researcher responsibly disclosed the vulnerability to Anthropic, the company behind the Claude AI assistant. Following standard security practice, the details were made public only after the company had time to develop and deploy a fix to its user base, so that publishing the report would not enable active exploitation.

Anthropic’s Response and Patch

Upon receiving the disclosure, Anthropic’s security team developed a patch for the Claude Chrome extension. The update addresses the underlying code that allowed the silent prompt injection, closing the security gap. The company has rolled out the fixed version through the Chrome Web Store, and users are advised to confirm that the extension has updated to the latest version, either automatically or through a manual update.

Anthropic has not reported any evidence that this vulnerability was exploited in the wild before the patch was issued. The company emphasized its commitment to user security and its bug bounty program, which encourages external researchers to report potential issues.

Broader Implications for AI Security

This incident underscores the evolving security challenges presented by AI-powered browser extensions. As these tools gain deeper integration into web browsers and access to sensitive user data, they become attractive targets for cybercriminals. A zero-click vulnerability is particularly severe because it removes the need for social engineering or user error, making attacks easier to scale and harder to detect.

Security experts note that the integration of large language models (LLMs) into everyday applications introduces new attack vectors, such as prompt injection, which traditional software security models are still adapting to address. This case demonstrates how a standard web vulnerability like XSS can have novel and dangerous consequences when combined with an AI interface.

Recommendations for Users and Developers

Users of the Claude extension, or any AI-powered browser tool, should verify that they are running the latest version; keeping browser extensions updated is a critical security habit. Users should also be cautious about the permissions they grant to extensions and review them periodically.

For developers, this event highlights the importance of rigorous security testing for extensions, especially those that handle AI prompts and have access to web page content. Implementing strict content security policies, sanitizing all inputs, and conducting regular security audits are essential steps to prevent similar vulnerabilities.
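As a rough illustration of the input-validation advice above, the following TypeScript sketch shows one way an extension might gate incoming messages before treating them as prompts. It is not Anthropic’s code or its fix; the origin `https://assistant.example`, the message shape, the length limit, and the names `TRUSTED_ORIGINS`, `MAX_PROMPT_LENGTH`, and `acceptPrompt` are all hypothetical and chosen only for this example.

```typescript
// Hypothetical allowlist: exact origins only, no wildcard subdomains.
const TRUSTED_ORIGINS: ReadonlySet<string> = new Set<string>([
  "https://assistant.example",
]);

// Hypothetical upper bound on prompt size, chosen for illustration.
const MAX_PROMPT_LENGTH = 4000;

// Assumed message shape for this sketch.
interface PromptMessage {
  type: "prompt";
  text: string;
}

// Narrow unknown data to PromptMessage, rejecting anything malformed.
function isPromptMessage(data: unknown): data is PromptMessage {
  if (typeof data !== "object" || data === null) {
    return false;
  }
  const candidate = data as Record<string, unknown>;
  return (
    candidate.type === "prompt" &&
    typeof candidate.text === "string" &&
    candidate.text.length > 0 &&
    candidate.text.length <= MAX_PROMPT_LENGTH
  );
}

// Return the prompt text only when the sender origin matches the
// allowlist exactly and the payload is well formed; otherwise null.
function acceptPrompt(senderOrigin: string, data: unknown): string | null {
  if (!TRUSTED_ORIGINS.has(senderOrigin)) {
    return null;
  }
  if (!isPromptMessage(data)) {
    return null;
  }
  return data.text;
}
```

In this sketch, a message from an arbitrary web page is dropped before it can reach the chat interface, and a lookalike origin such as `https://assistant.example.evil.test` fails the exact-match check. An origin check alone does not help if a trusted origin itself has an XSS flaw, which is why the other steps above, such as content security policies and regular audits, still apply.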

Patching this flaw is a positive step, but the security community anticipates continued scrutiny of AI assistant integrations. Researchers and developers are expected to focus more on the unique threat models posed by prompt injection and other AI-specific attacks as these technologies become more widespread.

Source: The Hacker News