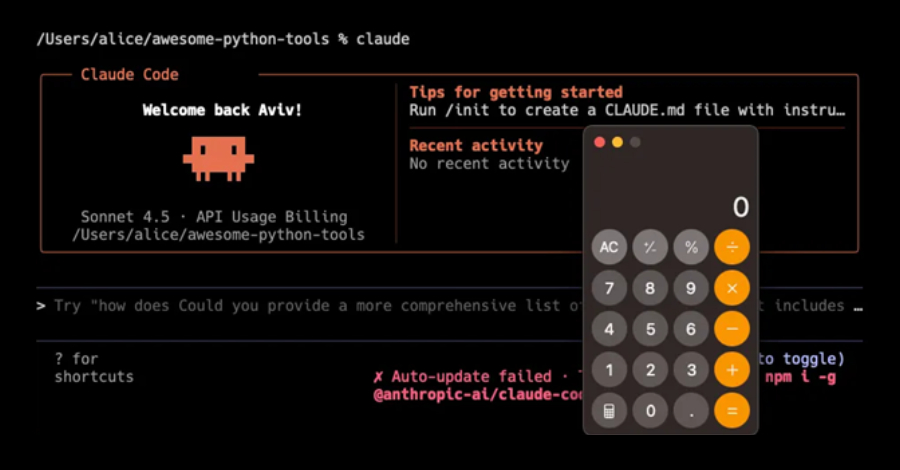

cybersecurity researchers have disclosed multiple security vulnerabilities in Anthropic’s Claude Code, an artificial intelligence powered coding assistant. These flaws could allow attackers to execute malicious code remotely and steal sensitive API credentials.

The disclosure was made public this week, though specific dates for the discovery or reporting were not provided. The vulnerabilities pose a significant risk to developers and organizations using the AI tool for coding assistance, as they could lead to complete system compromise and data theft.

Exploitation Through Configuration Mechanisms

The security weaknesses exploit various configuration mechanisms within the Claude Code environment. According to researchers, the attack vectors include Hooks, the Model Context Protocol servers, and environment variables.

By manipulating these components, a threat actor could achieve remote code execution. This would grant them the ability to run arbitrary commands on a victim’s system. The vulnerabilities also create a pathway for exfiltrating API keys and other authentication tokens.

Such credentials could then be used to access other services or proprietary code repositories. The exact technical methodology of the exploits has been detailed in a security advisory.

Scope and Potential Impact

Claude Code is designed to help developers write, explain, and debug software. It integrates directly into integrated development environments and code editors. This deep integration is what makes the security flaws particularly concerning.

A successful attack would not be limited to the AI assistant itself. It could potentially affect the entire development workstation and connected networks. The theft of API keys could have cascading security implications for an organization’s entire software supply chain.

Anthropic, the company behind Claude Code, is known for its focus on AI safety and alignment. This disclosure highlights the complex security challenges facing AI-powered developer tools as they become more deeply embedded in workflows.

Response and Mitigation

Researchers have coordinated the disclosure with Anthropic. The standard process for responsible vulnerability disclosure involves notifying the vendor first and allowing time for a patch to be developed before making details public.

Users of Claude Code are advised to monitor official channels from Anthropic for security updates and patches. Immediate best practices include reviewing and securing configuration settings related to Hooks and MCP servers.

Organizations should also audit environment variables used in development environments where Claude Code is active. Rotating any API keys that may have been exposed is a recommended precautionary step.

The cybersecurity community emphasizes that this case underscores the importance of applying security principles to emerging AI tools. These tools, while powerful, introduce new attack surfaces that must be rigorously assessed.

Broader Implications for AI Security

This incident follows a growing trend of security research focused on AI and machine learning systems. As these systems gain capabilities to interact with external tools and data, their potential vulnerability footprint expands.

The Model Context Protocol, specifically mentioned in the disclosure, is a framework that allows AI models to connect with external data sources and tools. Securing such protocols is critical for the safe adoption of AI assistants in professional and technical settings.

Security analysts note that the integration of large language models into developer tools requires a new security paradigm. Traditional application security models may not fully address the unique risks posed by AI agents with execution capabilities.

Anthropic is expected to release a detailed security advisory and software updates to address the identified flaws. The company’s response and the effectiveness of the patches will be closely watched by the security and developer communities.

Further technical analysis of the vulnerabilities is likely to be published by the research team in the coming weeks. This will provide a clearer understanding of the exploit chain and help other AI tool developers harden their own systems.

Source: GeekWire