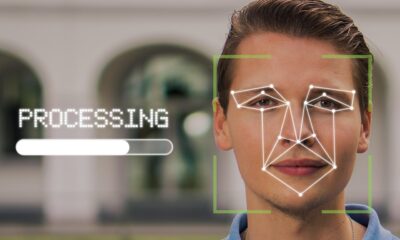

YouTube has expanded access to its artificial intelligence tool designed to detect synthetic content, commonly known as deepfakes. The platform is now making this technology available to politicians, government officials, and journalists, allowing them to request the removal of videos that feature their likeness without consent. This move aims to address the growing threat of manipulated media that could mislead the public or damage reputations.

How the New Process Works

The initiative provides a formal channel for eligible individuals to report AI-generated content that simulates their face or voice. Upon receiving a valid request, YouTube will review the content against its existing policies on synthetic media. If a video violates these rules, which prohibit misleading deepfakes that could cause serious harm, the platform will take it down. The tool is an extension of a privacy request process, not a separate policy.

YouTube’s policies already ban synthetic content that is not clearly labeled, especially when it depicts realistic violence, promotes scams, or uses the likeness of identifiable individuals for endorsements. The new process specifically empowers public figures to act more directly against unauthorized and harmful impersonations. The company has not disclosed the exact technical methods the AI detection tool uses to identify synthetic media.

Context and Industry Pressure

This expansion occurs amid increasing global concern over the potential for AI-generated content to disrupt democratic processes and spread misinformation. Other major technology companies, including Meta and TikTok, have implemented similar policies requiring labels on AI-generated content. Legislative bodies in several regions are also drafting laws to regulate deepfakes, particularly those targeting political candidates.

YouTube’s decision focuses on individuals who are at a higher risk of being targeted by malicious deepfakes due to their public roles. The company stated that protecting the integrity of information, especially during critical events like elections, is a priority. This step is part of a broader suite of measures YouTube has rolled out to combat misinformation and manipulated media on its platform.

Limitations and Challenges

While the expanded access gives public figures a new tool, challenges remain. The system relies on individuals proactively identifying and reporting content, so it may not capture all violations. Furthermore, the growing sophistication of AI generation tools continues to pose a significant detection challenge for automated systems. Experts note that a multi-faceted approach combining technology, policy, and user education is necessary to mitigate the risks of synthetic media effectively.

The policy also navigates complex areas of parody and satire, which may be protected under various legal frameworks. YouTube has indicated that its review process will consider context, including whether the content is presented as artistic or humorous, when evaluating removal requests. Balancing enforcement with freedom of expression remains a key consideration for the platform.

Expected Next Steps

YouTube plans to closely monitor the usage and effectiveness of this expanded reporting tool. The company may adjust the eligibility criteria or the review process based on feedback and emerging trends. As AI technology advances, further updates to the platform’s synthetic media policies and detection capabilities are anticipated. Industry observers expect other social media platforms to refine their own deepfake mitigation strategies in response to technological and regulatory developments.

Source: Various industry reports