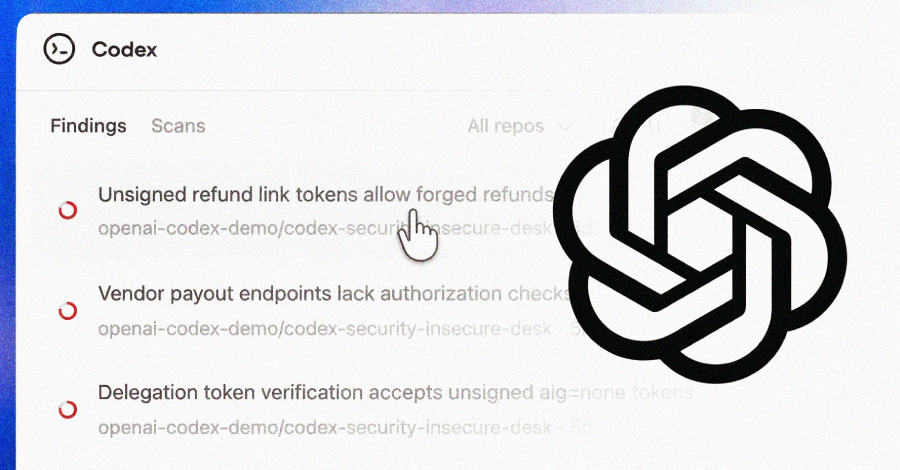

OpenAI has launched a new artificial intelligence-powered security agent designed to identify and help fix vulnerabilities in code. The tool, called Codex Security, began rolling out to select customers on Friday.

The system is available as a research preview to users on the ChatGPT Pro, Enterprise, Business, and Edu tiers. Access is provided through the Codex web interface, and OpenAI is offering free usage for the first month. The company stated the agent is engineered to find, validate, and propose fixes for security flaws.

Scope and Scale of Initial Findings

During its development and testing phase, Codex Security scanned approximately 1.2 million commits, according to information from OpenAI. From this analysis, it identified 10,561 vulnerabilities classified as high severity.

This large-scale scan provides an early indicator of the tool’s potential capacity to process vast amounts of code. The findings suggest a significant number of high-priority security problems may exist in active development projects.
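To put the two reported figures in proportion, the short sketch below works out a rough rate of high-severity findings per 1,000 commits. It treats the approximate commit count as exact and assumes nothing about how findings are distributed across commits or projects, so the result is only an order-of-magnitude illustration. The function and variable names are illustrative and do not come from OpenAI.

```python
# Figures as reported by OpenAI; the commit count is approximate.
commits_scanned = 1_200_000
high_severity_findings = 10_561


def findings_per_thousand(findings: int, commits: int) -> float:
    """Return the number of findings per 1,000 commits scanned."""
    return findings / commits * 1000


rate = findings_per_thousand(high_severity_findings, commits_scanned)
commits_per_finding = commits_scanned / high_severity_findings

print(f"{rate:.1f} high-severity findings per 1,000 commits")
print(f"about one finding every {commits_per_finding:.0f} commits")
```

On these numbers the rate is a little under nine high-severity findings per 1,000 commits, or roughly one for every 114 commits scanned.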

Technical Function and Project Context

OpenAI describes Codex Security as building “deep context” about a software project to perform its analysis. This approach implies the AI goes beyond simple pattern matching. It likely attempts to understand the project’s structure, dependencies, and intended behavior so that it can distinguish genuine security risks from false positives.

The technology aims to serve as an automated security assistant for developers. By integrating into the development workflow, it seeks to identify vulnerabilities earlier in the software development lifecycle. The goal is to reduce the time and expertise required for manual security audits.

Availability and Access Model

The current release is framed as a research preview, indicating it is not yet a finalized product. Availability is limited to the ChatGPT Pro, Enterprise, Business, and Edu tiers. The one-month free usage period allows these early adopters to evaluate the tool’s effectiveness without immediate cost.

This staged rollout is common for new AI features from the company. It allows OpenAI to gather real-world data, monitor performance, and make adjustments before a potential wider release. The early emphasis on business and education customers targets environments where code security is a critical concern.

Industry Context and Development

Codex Security enters a competitive market for application security testing. Numerous established companies offer static application security testing (SAST) and software composition analysis (SCA) tools. OpenAI’s entry is distinguished by its foundation in large language models and its integration within the broader ChatGPT ecosystem.

AI-powered code analysis has been a growing trend, with several startups and large technology firms investing in similar capabilities. These tools promise to augment human developers by automatically reviewing code for common mistakes, insecure configurations, and known vulnerability patterns.

Future Developments and Next Steps

Following the research preview period, OpenAI is expected to evaluate feedback and usage data from the initial customer group. The company will likely assess the accuracy of the vulnerability findings, the usefulness of the proposed fixes, and the tool’s overall performance in diverse development environments.

Based on this evaluation, OpenAI may refine the AI models, adjust the feature set, or modify the access policy. A decision on general availability, broader pricing tiers, or integration into other OpenAI platforms could follow. The company’s next official announcements regarding Codex Security will likely detail the results of the preview and outline a roadmap for its future development.

Source: OpenAI